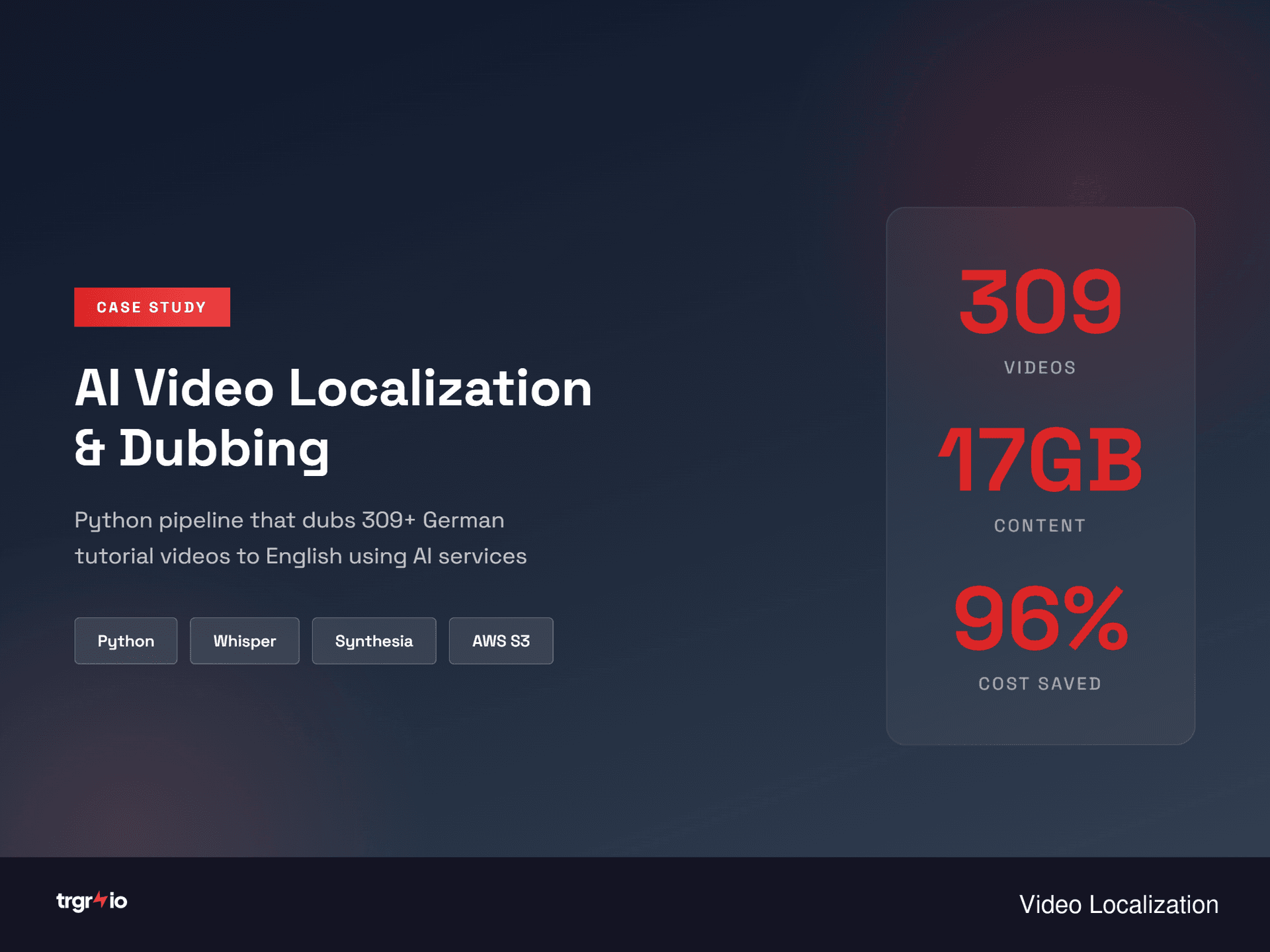

AI Video Localization & Dubbing Pipeline

Automated translation and dubbing of 309 tutorial videos at roughly 96% lower cost than manual localization quotes.

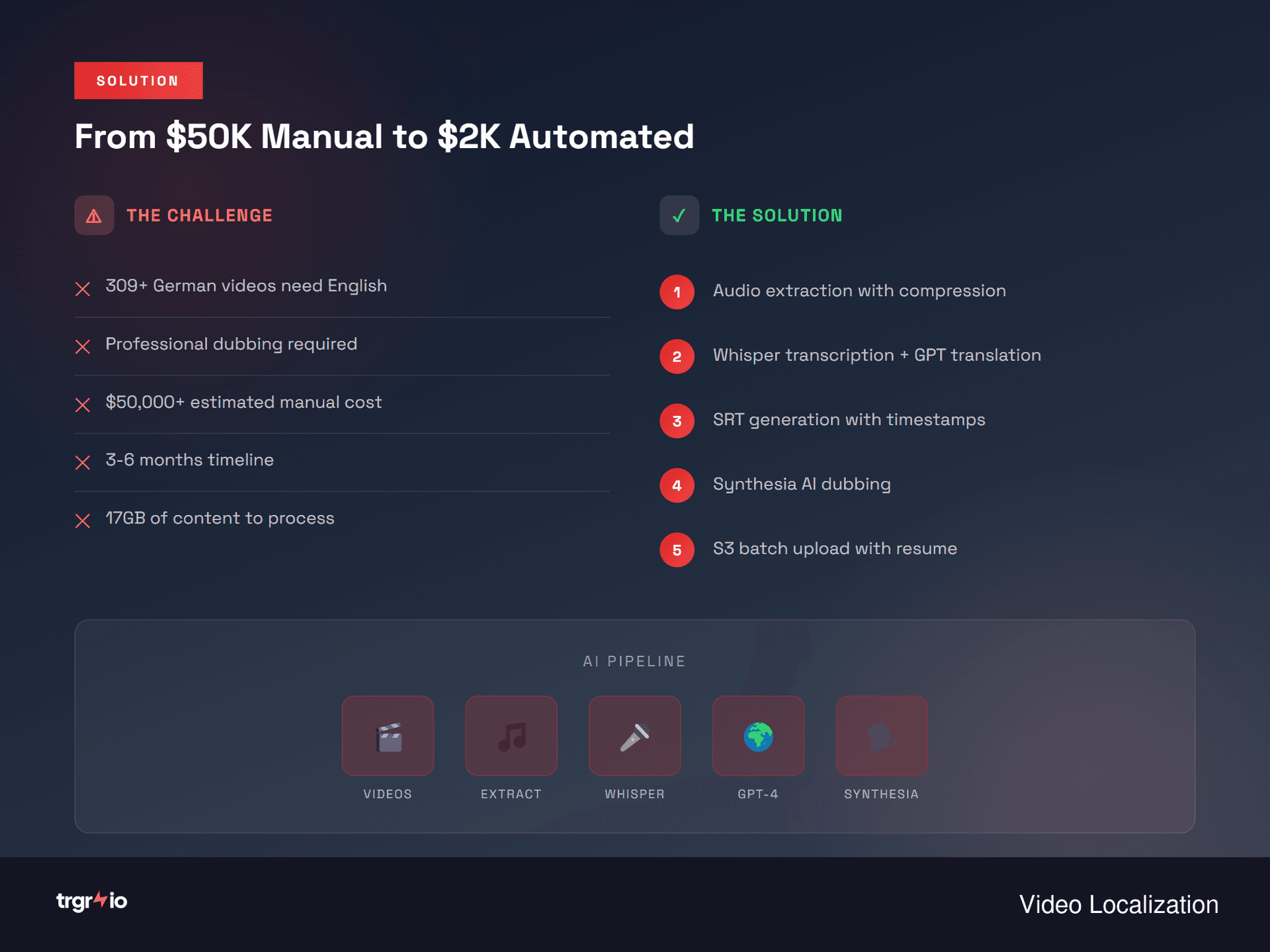

The Challenge

A global CAD software company headquartered in Germany had built a library of 309 German-language tutorial videos (17GB total), covering everything from beginner onboarding to advanced parametric modeling. To expand into English-speaking markets, they needed every video localized: not just subtitled, but fully dubbed with natural-sounding English narration synced to the visual content. Quotes from three professional localization agencies came in at $50,000-75,000 with delivery in 3-6 months. The videos were organized across 15+ content modules, ranged from 2 to 45 minutes in length, and some contained on-screen text overlays that also needed translation. The client needed a solution that was fast, cost-effective, and able to process the library in batches without manual intervention for each video.

Our Solution

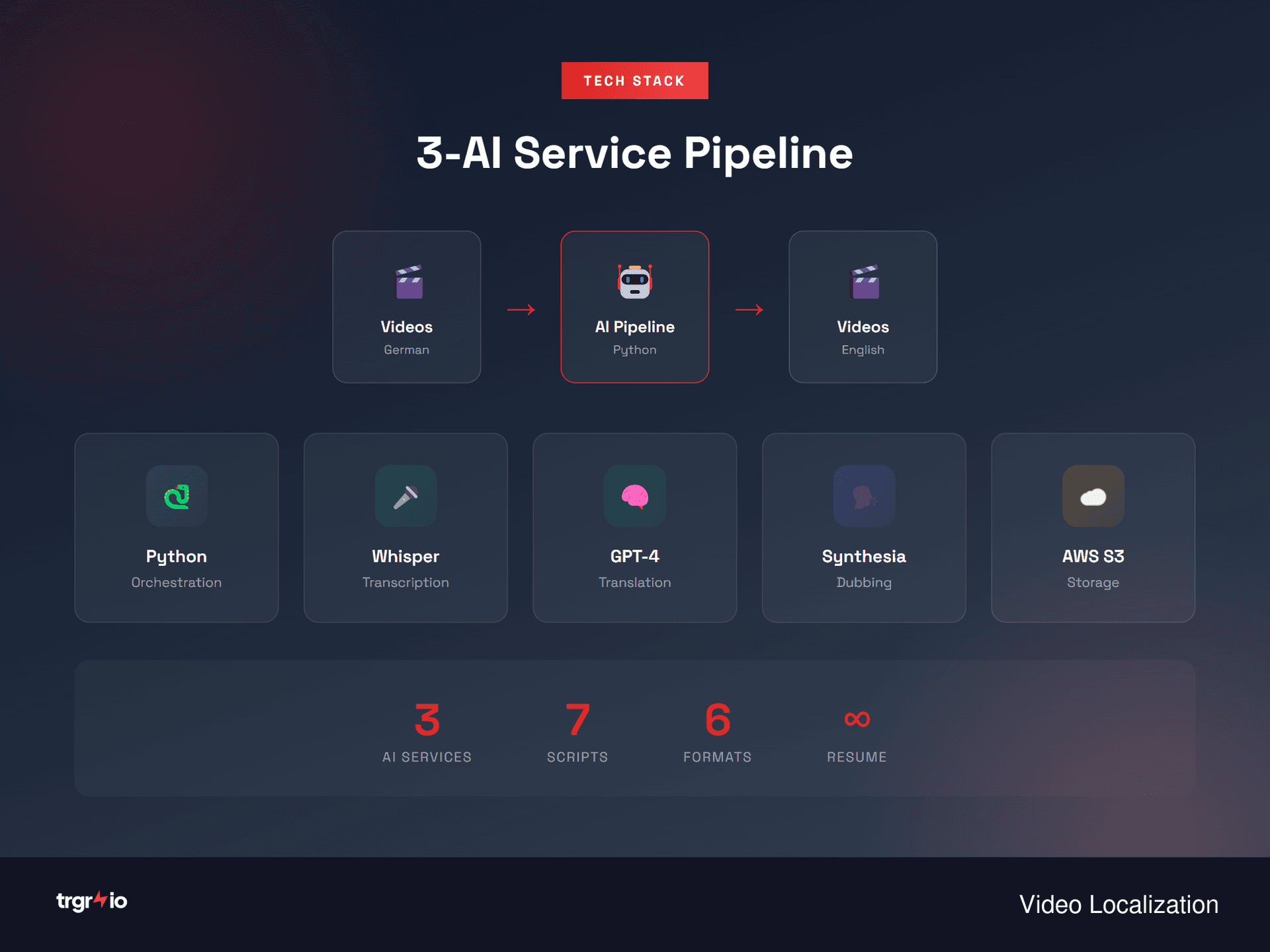

We built an end-to-end AI localization pipeline in Python that processes each video in four stages: audio extraction, transcription, translation, and dubbing. OpenAI Whisper handles the German speech-to-text with timestamps, GPT-4o-mini translates each segment while preserving technical CAD terminology via a custom glossary, and Synthesia's API generates English dubbed audio tracks synced to the original timing. The pipeline includes batch S3 upload/download for the 17GB library, checkpoint-based progress persistence (so it can resume after interruptions), automatic quality scoring that flags segments where audio-visual sync drifts beyond 500ms, and organized folder output mirroring the original 15+ module structure. We also built a review dashboard where the client's team could listen to original vs. dubbed segments side-by-side and flag any that needed re-processing.
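The 500ms sync check can be illustrated with a short sketch. This is not the project's scoring code: it assumes each segment records the original start and end times plus the measured start and end of the dubbed audio, all in seconds, and the `Segment` fields and the `flag_drift` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

DRIFT_THRESHOLD_S = 0.5  # 500 ms, the threshold quoted in the text


@dataclass
class Segment:
    index: int
    original_start: float  # seconds, from the transcription timestamps
    original_end: float
    dubbed_start: float    # seconds, measured on the dubbed track (assumed available)
    dubbed_end: float


def drift_seconds(seg: Segment) -> float:
    """Largest absolute offset between the original and dubbed boundaries."""
    return max(
        abs(seg.dubbed_start - seg.original_start),
        abs(seg.dubbed_end - seg.original_end),
    )


def flag_drift(segments, threshold=DRIFT_THRESHOLD_S):
    """Return the indices of segments whose drift exceeds the threshold."""
    return [s.index for s in segments if drift_seconds(s) > threshold]


segments = [
    Segment(0, 0.0, 2.0, 0.1, 2.2),   # drifts by about 0.2 s
    Segment(1, 2.0, 5.0, 2.0, 5.75),  # ends 0.75 s late
]
flagged = flag_drift(segments)
```

Here `flagged` contains only segment 1, which would then be queued for review or re-processing.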

Detailed Approach

We architected the pipeline as a modular Python application with four distinct stages, each independently runnable and resumable. Stage 1 downloads videos from the client's S3 bucket and extracts audio using FFmpeg. Stage 2 runs OpenAI Whisper (large-v2 model) for German transcription, outputting timestamped SRT files. We tested multiple Whisper model sizes and found large-v2 offered the best accuracy for German technical content without prohibitive processing time.
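Stages 1 and 2 could look roughly like the sketch below. The helper names are hypothetical, and the 16 kHz mono WAV settings are an assumption rather than a detail from the project. The `whisper.load_model` and `model.transcribe` calls come from the open-source `openai-whisper` package, whose result includes a `segments` list with `start`, `end`, and `text` fields.

```python
def ffmpeg_extract_audio_cmd(video_path, wav_path):
    """Build an FFmpeg command that drops the video stream and writes mono 16 kHz audio."""
    return [
        "ffmpeg", "-y",
        "-i", str(video_path),
        "-vn",            # no video in the output
        "-ac", "1",       # mono (assumed setting)
        "-ar", "16000",   # 16 kHz sample rate (assumed setting)
        str(wav_path),
    ]


def transcribe_german(audio_path):
    """Transcribe German audio with Whisper large-v2 and return its segments."""
    import whisper  # imported lazily so the helpers below work without the package

    model = whisper.load_model("large-v2")
    result = model.transcribe(str(audio_path), language="de")
    return result["segments"]


def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = int(round(seconds * 1000))
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def segments_to_srt(segments) -> str:
    """Render segments (dicts with start, end, text) as SRT blocks."""
    blocks = []
    for i, seg in enumerate(segments, start=1):
        blocks.append(
            f"{i}\n"
            f"{srt_timestamp(seg['start'])} --> {srt_timestamp(seg['end'])}\n"
            f"{seg['text'].strip()}\n"
        )
    return "\n".join(blocks)
```

The command list would be passed to `subprocess.run(cmd, check=True)`, and the string returned by `segments_to_srt` written next to the video so later stages can pick it up.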

Stage 3 handles translation using GPT-4o-mini with a custom system prompt that includes a 340-term glossary of CAD-specific vocabulary (e.g., "Baugruppe" → "Assembly", "Freiformfläche" → "Freeform Surface"). We process segments in batches of 20 with context windows that include surrounding segments for coherence. Each translated segment gets a confidence score, and low-confidence translations are flagged for human review.
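The glossary prompt and the batching with surrounding context can be sketched as follows. This is an illustration under assumptions, not the production prompt: the prompt wording, the two-segment context size, and the function names are all hypothetical, and only the two glossary entries quoted above are shown.

```python
# Two entries quoted in the text; the full glossary has about 340 terms.
GLOSSARY = {
    "Baugruppe": "Assembly",
    "Freiformfläche": "Freeform Surface",
}


def build_system_prompt(glossary):
    """Embed the glossary in a system prompt (wording is illustrative only)."""
    lines = [f"- {de} -> {en}" for de, en in sorted(glossary.items())]
    return (
        "You translate German CAD tutorial narration into English. "
        "Always use these glossary translations:\n" + "\n".join(lines)
    )


def batches_with_context(segments, batch_size=20, context=2):
    """Yield batches of segments plus neighbours on each side for coherence.

    Only the 'translate' entries are meant to be translated; 'before' and
    'after' are read-only context. The context size of 2 is an assumption.
    """
    for start in range(0, len(segments), batch_size):
        end = min(start + batch_size, len(segments))
        yield {
            "before": segments[max(0, start - context):start],
            "translate": segments[start:end],
            "after": segments[end:end + context],
        }


segments = [f"s{i}" for i in range(45)]
batches = list(batches_with_context(segments))
```

With 45 segments this produces three batches of 20, 20, and 5 segments. Each batch, together with the system prompt, would form one request to the translation model.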

Stage 4 sends translated text with timing data to Synthesia's dubbing API, which generates English audio matched to the original segment durations. We built a post-processing step using FFmpeg that replaces the original German audio track with the new English track, adjusting for any minor timing discrepancies. The entire pipeline writes progress to a JSON checkpoint file after each video, enabling clean resume after any interruption — critical for a multi-day batch job.
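A minimal sketch of the checkpoint-and-resume loop and the audio replacement step is shown below. The checkpoint layout (a `completed` list of video IDs) and all function names are assumptions for illustration. The FFmpeg flags are standard ones: `-map` selects the video stream from the first input and the audio stream from the second, and `-c:v copy` avoids re-encoding the video.

```python
import json
import os
from pathlib import Path


def load_checkpoint(path):
    """Return the set of video IDs already processed (empty if no file yet)."""
    p = Path(path)
    if not p.exists():
        return set()
    return set(json.loads(p.read_text(encoding="utf-8"))["completed"])


def save_checkpoint(path, completed):
    """Write the checkpoint via a temp file so a crash cannot leave it half-written."""
    p = Path(path)
    tmp = p.with_name(p.name + ".tmp")
    tmp.write_text(json.dumps({"completed": sorted(completed)}, indent=2), encoding="utf-8")
    os.replace(tmp, p)  # atomic rename on the same filesystem


def run_batch(video_ids, process_one, checkpoint_path):
    """Process each video once, persisting progress after every video."""
    completed = load_checkpoint(checkpoint_path)
    for vid in video_ids:
        if vid in completed:
            continue  # finished in an earlier run
        process_one(vid)
        completed.add(vid)
        save_checkpoint(checkpoint_path, completed)
    return completed


def ffmpeg_replace_audio_cmd(video_path, english_audio_path, output_path):
    """Build an FFmpeg command that keeps the video and swaps in the English audio."""
    return [
        "ffmpeg", "-y",
        "-i", str(video_path),
        "-i", str(english_audio_path),
        "-map", "0:v:0",   # video from the original file
        "-map", "1:a:0",   # audio from the dubbed track
        "-c:v", "copy",    # do not re-encode video
        "-shortest",       # stop at the shorter of the two streams
        str(output_path),
    ]
```

If a run is interrupted partway through, calling `run_batch` again with the same checkpoint path skips every video already recorded and continues with the first unfinished one.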

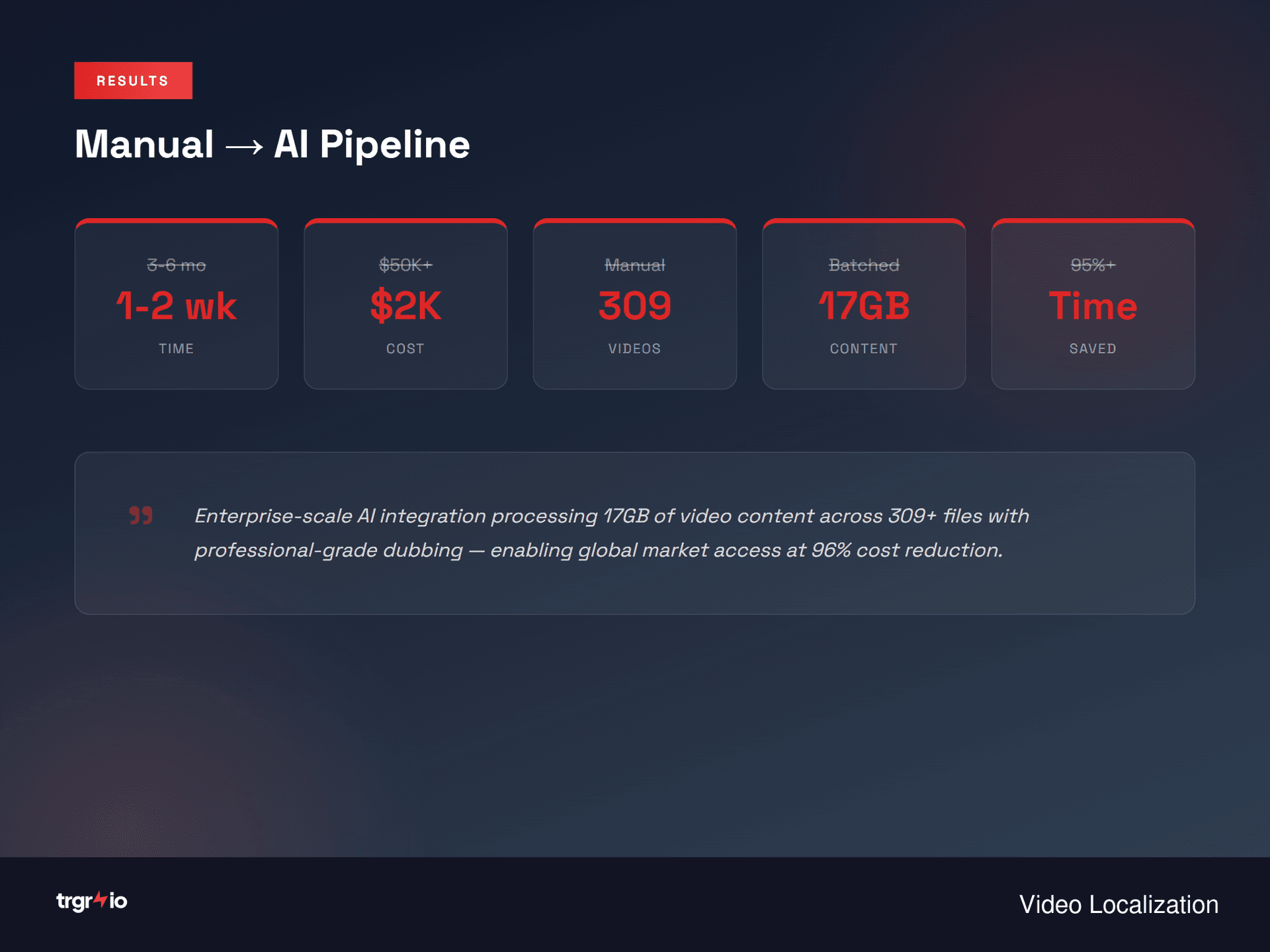

Key Results

- ✓309 videos localized in 18 days, vs. an estimated 3-6 months with traditional agencies

- ✓Total cost of $2,100 (API costs + development) — 96% savings vs. $50,000+ quotes

- ✓92% of dubbed segments passed quality review on first pass without manual correction

- ✓Custom glossary ensured consistent translation of 340+ CAD-specific technical terms

- ✓Pipeline is reusable — client later used it to localize into French and Spanish at marginal cost

- ✓Average audio-visual sync accuracy of 97.3% across all processed segments

Pipeline development took 2 weeks including the custom glossary and quality scoring system. Processing the full 309-video library ran over 5 days on an AWS EC2 instance. Client review and re-processing of flagged segments added another week.
